Probability of Failure Estimation with Gaussian Processes

!pip install botorch -U

import qmcpy as qp
import gpytorch
import torch
import os
import warnings
import pandas as pd
from gpytorch.utils.warnings import NumericalWarning
warnings.filterwarnings("ignore")
pd.set_option(
    'display.max_rows', None,
    'display.max_columns', None,
    'display.width', 1000,
    'display.colheader_justify', 'center',
    'display.precision',2,
    'display.float_format',lambda x:'%.1e'%x)
from matplotlib import pyplot
pyplot.style.use("../qmcpy/qmcpy.mplstyle")

gpytorch_use_gpu = torch.cuda.is_available()

gpytorch_use_gpu

False

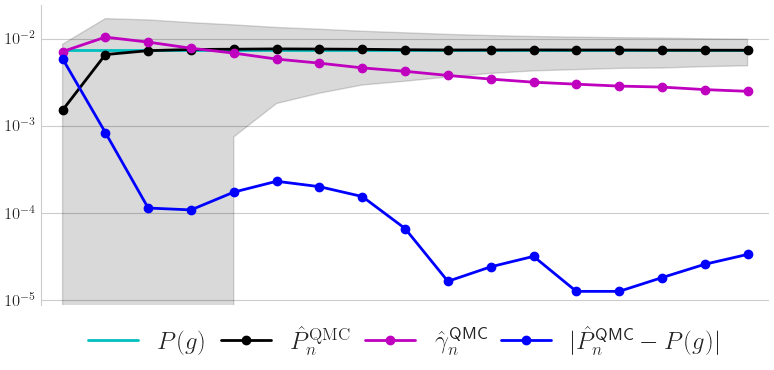

Sin 1d Problem

mcispfgp = qp.PFGPCI(
    integrand = qp.Sin1d(qp.DigitalNetB2(1,seed=17),k=3),
    failure_threshold = 0,
    failure_above_threshold=True,
    abs_tol = 1e-2,
    alpha = 1e-1,
    n_init = 4,
    init_samples = None,
    batch_sampler = qp.PFSampleErrorDensityAR(verbose=True),
    n_batch = 4,
    n_max = 20,
    n_approx = 2**18,
    gpytorch_prior_mean = gpytorch.means.ZeroMean(),
    gpytorch_prior_cov = gpytorch.kernels.ScaleKernel(
        gpytorch.kernels.MaternKernel(nu=2.5,
            lengthscale_constraint = gpytorch.constraints.Interval(.01,.1)
            ),
        outputscale_constraint = gpytorch.constraints.Interval(1e-3,10)
        ),
    gpytorch_likelihood = gpytorch.likelihoods.GaussianLikelihood(noise_constraint = gpytorch.constraints.Interval(1e-12,1e-8)),
    gpytorch_marginal_log_likelihood_func = lambda likelihood,gpyt_model: gpytorch.mlls.ExactMarginalLogLikelihood(likelihood,gpyt_model),
    torch_optimizer_func = lambda gpyt_model: torch.optim.Adam(gpyt_model.parameters(),lr=0.1),
    gpytorch_train_iter = 100,
    gpytorch_use_gpu = False,
    verbose = 50,
    n_ref_approx = 2**22,
    seed_ref_approx = None)
solution,data = mcispfgp.integrate(seed=7,refit=False)
print(data)
df = pd.DataFrame(data.get_results_dict())
print("\nIteration Summary")
print(df)

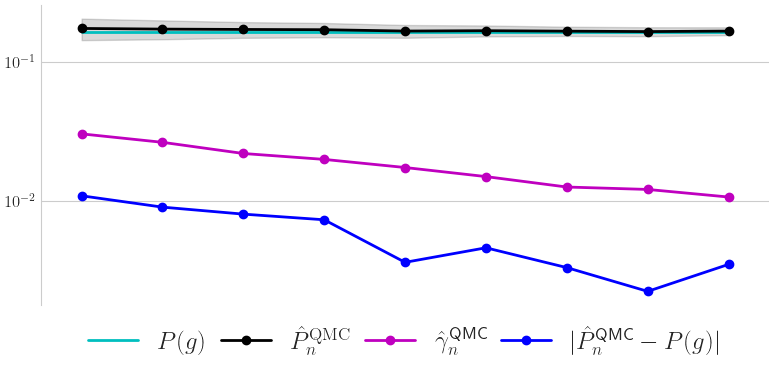

data.plot();

reference approximation with d=1: 0.5000002384185791

batch 0

gpytorch model fitting

iter 50 of 100

likelihood.noise_covar.raw_noise.................. -8.63e-02

covar_module.raw_outputscale...................... -3.04e+00

covar_module.base_kernel.raw_lengthscale.......... 5.04e+00

iter 100 of 100

likelihood.noise_covar.raw_noise.................. -1.01e-01

covar_module.raw_outputscale...................... -2.96e+00

covar_module.base_kernel.raw_lengthscale.......... 6.29e+00

batch 1

AR sampling with efficiency 2.6e-01, expect 15 draws: 12, 15, 18, 21, 24,

batch 2

AR sampling with efficiency 1.7e-01, expect 23 draws: 16, 20,

batch 3

AR sampling with efficiency 1.3e-01, expect 30 draws: 20, 25,

batch 4

AR sampling with efficiency 6.1e-02, expect 65 draws: 48, 72,

PFGPCIData (AccumulateData Object)

solution 0.500

error_bound 0.073

bound_low 0.426

bound_high 0.573

n_total 20

time_integrate 0.644

PFGPCI (StoppingCriterion Object)

Sin1d (Integrand Object)

Uniform (TrueMeasure Object)

lower_bound 0

upper_bound 18.850

DigitalNetB2 (DiscreteDistribution Object)

d 1

dvec 0

randomize LMS_DS

graycode 0

entropy 17

spawn_key ()

Iteration Summary

| | n_sum | n_batch | error_bounds | ci_low | ci_high | solutions | solutions_ref | error_ref | in_ci |
|---|---|---|---|---|---|---|---|---|---|
| 0 | 4 | 4 | 5.1e-01 | 0.0e+00 | 1.0e+00 | 4.9e-01 | 5.0e-01 | 1.4e-02 | True |
| 1 | 8 | 4 | 5.0e-01 | 0.0e+00 | 1.0e+00 | 5.0e-01 | 5.0e-01 | 2.2e-03 | True |
| 2 | 12 | 4 | 5.2e-01 | 0.0e+00 | 1.0e+00 | 4.8e-01 | 5.0e-01 | 1.6e-02 | True |
| 3 | 16 | 4 | 3.0e-01 | 2.1e-01 | 8.1e-01 | 5.1e-01 | 5.0e-01 | 1.0e-02 | True |
| 4 | 20 | 4 | 7.3e-02 | 4.3e-01 | 5.7e-01 | 5.0e-01 | 5.0e-01 | 4.7e-04 | True |

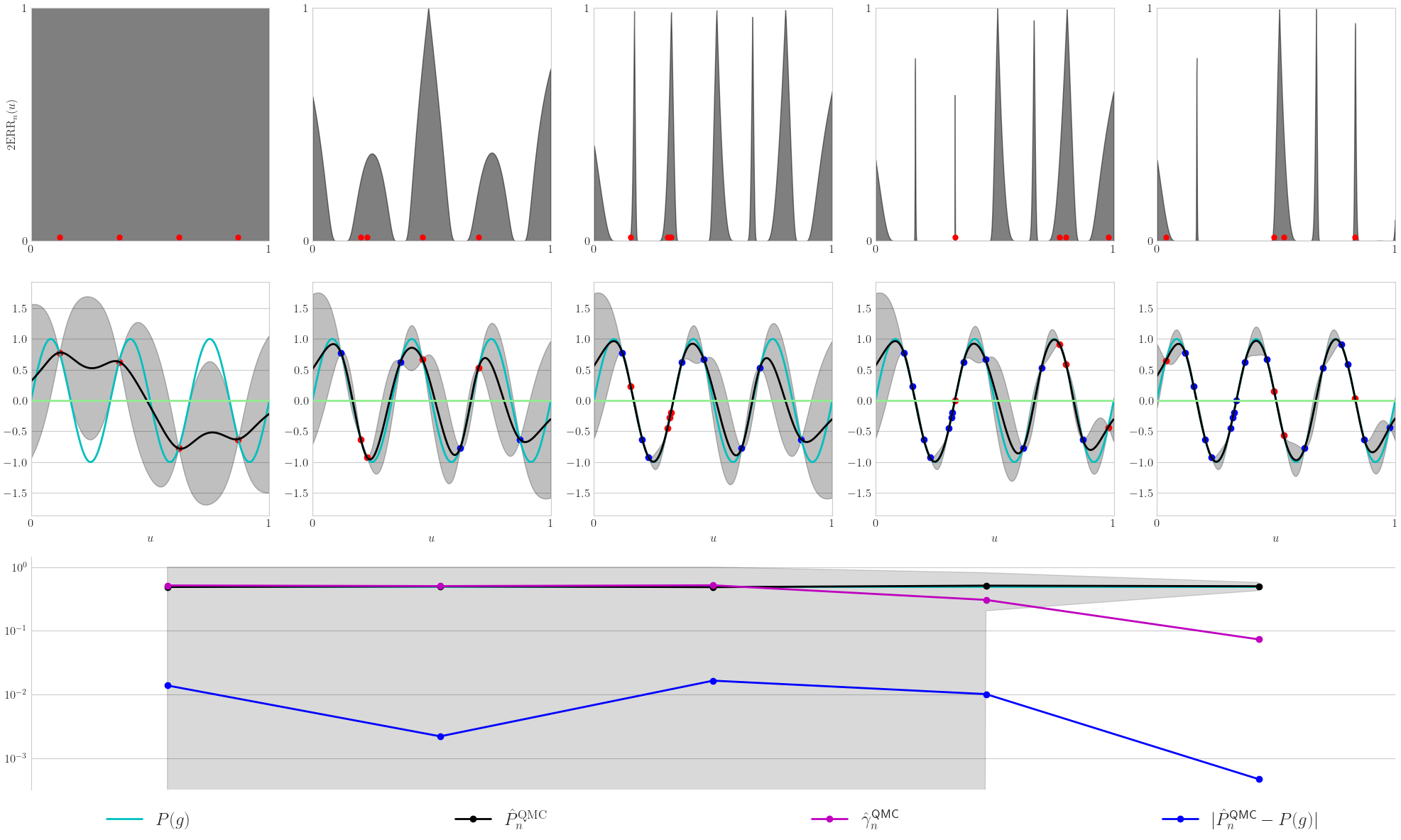
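The columns of the iteration summary appear to be related in a simple way: in every row printed for the Sin 1d run, `ci_low` and `ci_high` match `solutions` minus and plus `error_bounds`, clipped to [0, 1], and `in_ci` records whether `solutions_ref` falls inside that interval. The sketch below reproduces those columns from the rounded values in the table. The clipping rule is inferred from the printed numbers, not taken from the QMCPy source, and the variable names are illustrative.

```python
import numpy as np

# Rounded values copied from the Sin 1d iteration summary.
solutions = np.array([0.49, 0.50, 0.48, 0.51, 0.50])
error_bounds = np.array([0.51, 0.50, 0.52, 0.30, 0.073])
solution_ref = 0.5  # reference approximation, rounded

# Assumption: the interval is solution +/- error bound, clipped to [0, 1],
# since a probability cannot leave that range.
ci_low = np.clip(solutions - error_bounds, 0.0, 1.0)
ci_high = np.clip(solutions + error_bounds, 0.0, 1.0)

# The reference value is "in the interval" when it lies between the bounds.
in_ci = (ci_low <= solution_ref) & (solution_ref <= ci_high)

for row in zip(ci_low, ci_high, in_ci):
    print("ci_low=%.2f ci_high=%.2f in_ci=%s" % row)
```

Read this way, the first three intervals span all of [0, 1] and carry no information; only from 16 samples onward does the bound start to tighten.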

Multimodal 2d Problem

mcispfgp = qp.PFGPCI(
    integrand = qp.Multimodal2d(qp.DigitalNetB2(2,seed=17)),
    failure_threshold = 0,
    failure_above_threshold=True,
    abs_tol = 1e-2,
    alpha = 1e-1,
    n_init = 64,
    init_samples = None,
    batch_sampler = qp.PFSampleErrorDensityAR(verbose=True),
    n_batch = 16,
    n_max = 128,
    n_approx = 2**18,
    gpytorch_prior_mean = gpytorch.means.ZeroMean(),
    gpytorch_prior_cov = gpytorch.kernels.ScaleKernel(
        gpytorch.kernels.MaternKernel(nu=1.5,
            lengthscale_constraint = gpytorch.constraints.Interval(.1,1)
            ),
        outputscale_constraint = gpytorch.constraints.Interval(1e-3,.5)
        ),
    gpytorch_likelihood = gpytorch.likelihoods.GaussianLikelihood(noise_constraint = gpytorch.constraints.Interval(1e-12,1e-8)),
    gpytorch_marginal_log_likelihood_func = lambda likelihood,gpyt_model: gpytorch.mlls.ExactMarginalLogLikelihood(likelihood,gpyt_model),
    torch_optimizer_func = lambda gpyt_model: torch.optim.Adam(gpyt_model.parameters(),lr=0.1),
    gpytorch_train_iter = 800,
    gpytorch_use_gpu = gpytorch_use_gpu,
    verbose = 200,
    n_ref_approx = 2**22,
    seed_ref_approx = None)
solution,data = mcispfgp.integrate(seed=7,refit=False)
print(data)
df = pd.DataFrame(data.get_results_dict())
print("\nIteration Summary")
print(df)
data.plot();

reference approximation with d=2: 0.3020772933959961

batch 0

gpytorch model fitting

iter 200 of 800

likelihood.noise_covar.raw_noise.................. 1.45e+00

covar_module.raw_outputscale...................... 2.92e+00

covar_module.base_kernel.raw_lengthscale.......... -3.02e+00

iter 400 of 800

likelihood.noise_covar.raw_noise.................. 1.49e+00

covar_module.raw_outputscale...................... 3.70e+00

covar_module.base_kernel.raw_lengthscale.......... -3.32e+00

iter 600 of 800

likelihood.noise_covar.raw_noise.................. 1.53e+00

covar_module.raw_outputscale...................... 4.24e+00

covar_module.base_kernel.raw_lengthscale.......... -3.43e+00

iter 800 of 800

likelihood.noise_covar.raw_noise.................. 1.58e+00

covar_module.raw_outputscale...................... 4.66e+00

covar_module.base_kernel.raw_lengthscale.......... -3.48e+00

batch 1

AR sampling with efficiency 7.6e-02, expect 211 draws: 144, 198,

batch 2

AR sampling with efficiency 3.8e-02, expect 416 draws: 288,

batch 3

AR sampling with efficiency 2.8e-02, expect 581 draws: 400, 500, 525,

batch 4

AR sampling with efficiency 2.0e-02, expect 785 draws: 544, 748, 816, 884,

PFGPCIData (AccumulateData Object)

solution 0.298

error_bound 0.079

bound_low 0.219

bound_high 0.377

n_total 128

time_integrate 4.295

PFGPCI (StoppingCriterion Object)

Multimodal2d (Integrand Object)

Uniform (TrueMeasure Object)

lower_bound [-4 -3]

upper_bound [7 8]

DigitalNetB2 (DiscreteDistribution Object)

d 2^(1)

dvec [0 1]

randomize LMS_DS

graycode 0

entropy 17

spawn_key ()

Iteration Summary

| | n_sum | n_batch | error_bounds | ci_low | ci_high | solutions | solutions_ref | error_ref | in_ci |
|---|---|---|---|---|---|---|---|---|---|
| 0 | 64 | 64 | 3.8e-01 | 0.0e+00 | 6.7e-01 | 3.0e-01 | 3.0e-01 | 5.7e-03 | True |
| 1 | 80 | 16 | 1.9e-01 | 8.1e-02 | 4.7e-01 | 2.7e-01 | 3.0e-01 | 2.8e-02 | True |
| 2 | 96 | 16 | 1.4e-01 | 1.5e-01 | 4.2e-01 | 2.9e-01 | 3.0e-01 | 1.5e-02 | True |
| 3 | 112 | 16 | 1.0e-01 | 2.0e-01 | 4.0e-01 | 3.0e-01 | 3.0e-01 | 4.5e-03 | True |
| 4 | 128 | 16 | 7.9e-02 | 2.2e-01 | 3.8e-01 | 3.0e-01 | 3.0e-01 | 4.0e-03 | True |

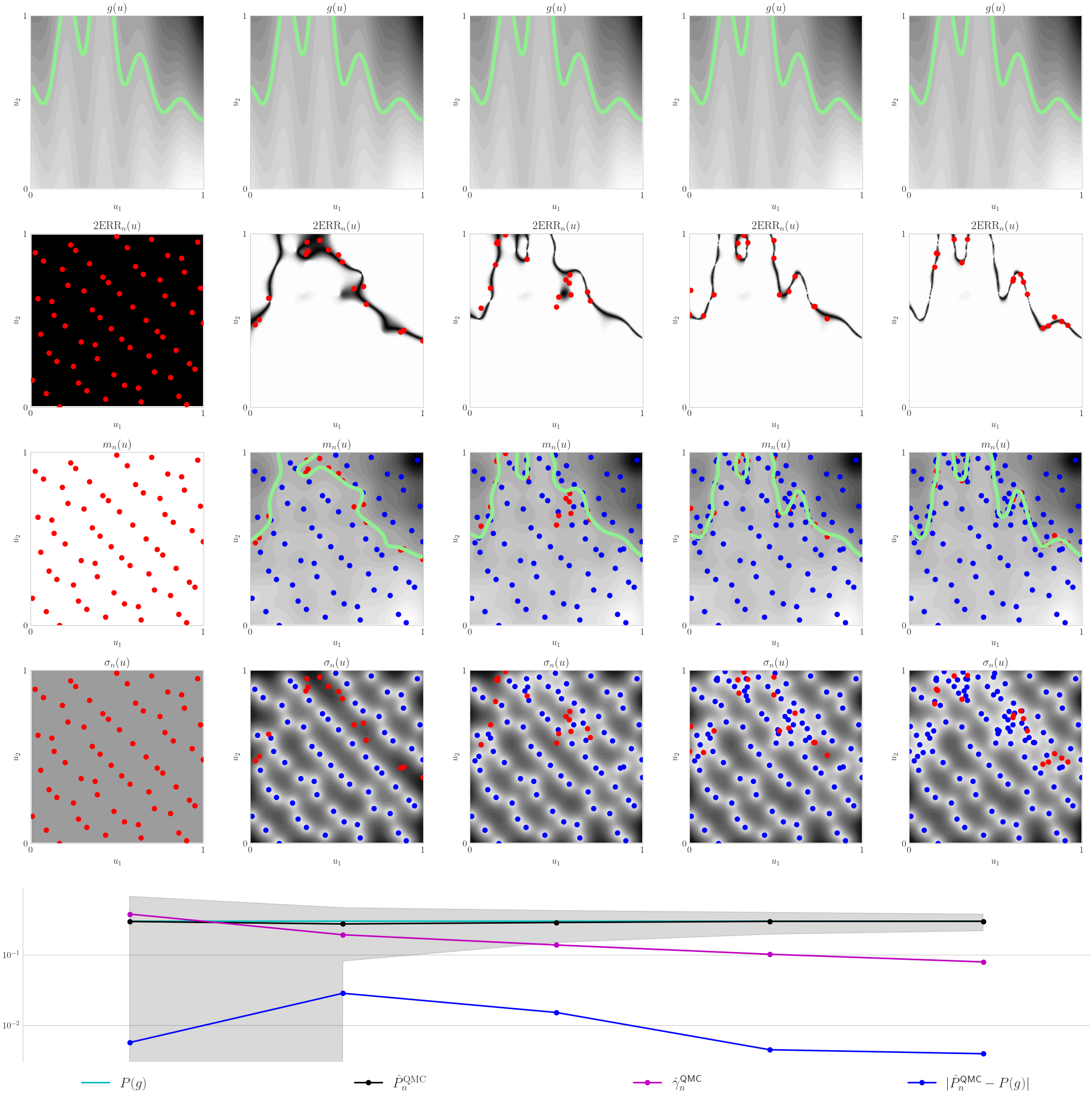
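The fitting log reports raw (unconstrained) parameters such as `raw_lengthscale`, which is why some printed values are negative or lie outside the `Interval` bounds passed to the kernel and likelihood. GPyTorch's `Interval` constraint maps a raw value to the constrained one with a sigmoid by default, `lower + (upper - lower) * sigmoid(raw)`. The sketch below applies that map to the final raw values of the Multimodal 2d fit, assuming the log prints the raw parameters unchanged. The helper name `interval_transform` is illustrative and not part of GPyTorch or QMCPy.

```python
import math

def interval_transform(raw, lower, upper):
    """Map an unconstrained value into (lower, upper) with a sigmoid."""
    return lower + (upper - lower) / (1.0 + math.exp(-raw))

# Final raw values from the Multimodal 2d log (iter 800 of 800),
# paired with the Interval bounds used in that run.
lengthscale = interval_transform(-3.48, 0.1, 1.0)
outputscale = interval_transform(4.66, 1e-3, 0.5)
noise = interval_transform(1.58, 1e-12, 1e-8)

print("lengthscale %.3f" % lengthscale)
print("outputscale %.3f" % outputscale)
print("noise       %.2e" % noise)
```

A raw value far below zero corresponds to a parameter close to its lower bound, and one far above zero to a parameter close to its upper bound, so raw values that keep drifting during training suggest the optimizer is pressing against a constraint.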

Four Branch 2d Problem

mcispfgp = qp.PFGPCI(
    integrand = qp.FourBranch2d(qp.DigitalNetB2(2,seed=17)),
    failure_threshold = 0,
    failure_above_threshold=True,
    abs_tol = 1e-2,
    alpha = 1e-1,
    n_init = 64,
    init_samples = None,
    batch_sampler = qp.PFSampleErrorDensityAR(verbose=True),
    n_batch = 12,
    n_max = 200,
    n_approx = 2**18,
    gpytorch_prior_mean = gpytorch.means.ZeroMean(),
    gpytorch_prior_cov = gpytorch.kernels.ScaleKernel(
        gpytorch.kernels.MaternKernel(nu=1.5,
            lengthscale_constraint = gpytorch.constraints.Interval(.5,1)
            ),
        outputscale_constraint = gpytorch.constraints.Interval(1e-8,.5)
        ),
    gpytorch_likelihood = gpytorch.likelihoods.GaussianLikelihood(noise_constraint = gpytorch.constraints.Interval(1e-12,1e-8)),
    gpytorch_marginal_log_likelihood_func = lambda likelihood,gpyt_model: gpytorch.mlls.ExactMarginalLogLikelihood(likelihood,gpyt_model),
    torch_optimizer_func = lambda gpyt_model: torch.optim.Adam(gpyt_model.parameters(),lr=0.1),
    gpytorch_train_iter = 800,
    gpytorch_use_gpu = gpytorch_use_gpu,
    verbose = 200,
    n_ref_approx = 2**22,
    seed_ref_approx = None)
solution,data = mcispfgp.integrate(seed=7,refit=False)
print(data)
df = pd.DataFrame(data.get_results_dict())
print("\nIteration Summary")
print(df)
data.plot();

reference approximation with d=2: 0.20872807502746582

batch 0

gpytorch model fitting

iter 200 of 800

likelihood.noise_covar.raw_noise.................. 2.89e+00

covar_module.raw_outputscale...................... 3.88e+00

covar_module.base_kernel.raw_lengthscale.......... -4.50e+00

iter 400 of 800

likelihood.noise_covar.raw_noise.................. 3.63e+00

covar_module.raw_outputscale...................... 4.78e+00

covar_module.base_kernel.raw_lengthscale.......... -5.44e+00

iter 600 of 800

likelihood.noise_covar.raw_noise.................. 4.17e+00

covar_module.raw_outputscale...................... 5.36e+00

covar_module.base_kernel.raw_lengthscale.......... -6.04e+00

iter 800 of 800

likelihood.noise_covar.raw_noise.................. 4.59e+00

covar_module.raw_outputscale...................... 5.80e+00

covar_module.base_kernel.raw_lengthscale.......... -6.48e+00

batch 1

AR sampling with efficiency 9.0e-03, expect 1337 draws: 924, 1232,

batch 2

AR sampling with efficiency 4.9e-03, expect 2461 draws: 1704, 2556,

batch 3

AR sampling with efficiency 3.7e-03, expect 3250 draws: 2256,

batch 4

AR sampling with efficiency 2.5e-03, expect 4858 draws: 3372, 3653, 3934,

PFGPCIData (AccumulateData Object)

solution 0.207

error_bound 0.009

bound_low 0.198

bound_high 0.217

n_total 112

time_integrate 4.376

PFGPCI (StoppingCriterion Object)

FourBranch2d (Integrand Object)

Uniform (TrueMeasure Object)

lower_bound -8

upper_bound 2^(3)

DigitalNetB2 (DiscreteDistribution Object)

d 2^(1)

dvec [0 1]

randomize LMS_DS

graycode 0

entropy 17

spawn_key ()

Iteration Summary

| | n_sum | n_batch | error_bounds | ci_low | ci_high | solutions | solutions_ref | error_ref | in_ci |
|---|---|---|---|---|---|---|---|---|---|
| 0 | 64 | 64 | 4.5e-02 | 1.6e-01 | 2.5e-01 | 2.0e-01 | 2.1e-01 | 3.8e-03 | True |
| 1 | 76 | 12 | 2.4e-02 | 1.9e-01 | 2.3e-01 | 2.1e-01 | 2.1e-01 | 1.4e-03 | True |
| 2 | 88 | 12 | 1.8e-02 | 1.9e-01 | 2.3e-01 | 2.1e-01 | 2.1e-01 | 1.0e-03 | True |
| 3 | 100 | 12 | 1.2e-02 | 1.9e-01 | 2.2e-01 | 2.1e-01 | 2.1e-01 | 1.6e-03 | True |
| 4 | 112 | 12 | 9.4e-03 | 2.0e-01 | 2.2e-01 | 2.1e-01 | 2.1e-01 | 1.4e-03 | True |
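
The `AR sampling` lines report the efficiency (acceptance rate) of an acceptance-rejection sampler, and the expected number of draws is roughly `n_batch / efficiency`. For the Four Branch run, 12 / 9.0e-03 is about 1333, in line with the 1337 printed for batch 1 once rounding of the displayed efficiency is taken into account. A generic sketch of acceptance-rejection sampling on the unit cube follows. It is not the `qp.PFSampleErrorDensityAR` implementation: the target `toy_density`, its upper bound, and the function `accept_reject` are hypothetical stand-ins chosen only to show the mechanics.

```python
import numpy as np

def toy_density(x):
    """Unnormalized density on the unit square, peaked near x0 = 0.5 (hypothetical)."""
    return np.exp(-50.0 * (x[:, 0] - 0.5) ** 2)

def accept_reject(unnorm_density, upper_bound, n, d, rng, chunk=256):
    """Draw n points from a density proportional to unnorm_density on [0,1]^d."""
    accepted, n_accepted, n_draws = [], 0, 0
    while n_accepted < n:
        x = rng.random((chunk, d))   # uniform proposals
        u = rng.random(chunk)        # uniform heights for the accept test
        keep = u * upper_bound <= unnorm_density(x)
        accepted.append(x[keep])
        n_accepted += int(keep.sum())
        n_draws += chunk
    efficiency = n_accepted / n_draws
    return np.concatenate(accepted)[:n], n_draws, efficiency

rng = np.random.default_rng(7)
samples, n_draws, efficiency = accept_reject(toy_density, 1.0, n=12, d=2, rng=rng)
print("kept %d of %d proposals, efficiency %.2e" % (len(samples), n_draws, efficiency))
```

The falling efficiencies in the logs fit this picture: as the target concentrates on a smaller part of the domain, a uniform proposal is accepted less often and more draws are needed per batch.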

Ishigami 3d Problem

mcispfgp = qp.PFGPCI(
    integrand = qp.Ishigami(qp.DigitalNetB2(3,seed=17)),
    failure_threshold = 0,
    failure_above_threshold=False,
    abs_tol = 1e-2,
    alpha = 1e-1,
    n_init = 128,
    init_samples = None,
    batch_sampler = qp.PFSampleErrorDensityAR(verbose=True),
    n_batch = 16,
    n_max = 256,
    n_approx = 2**18,
    gpytorch_prior_mean = gpytorch.means.ZeroMean(),
    gpytorch_prior_cov = gpytorch.kernels.ScaleKernel(
        gpytorch.kernels.MaternKernel(nu=2.5,
            lengthscale_constraint = gpytorch.constraints.Interval(.5,1)
            ),
        outputscale_constraint = gpytorch.constraints.Interval(1e-8,.5)
        ),
    gpytorch_likelihood = gpytorch.likelihoods.GaussianLikelihood(noise_constraint = gpytorch.constraints.Interval(1e-12,1e-8)),
    gpytorch_marginal_log_likelihood_func = lambda likelihood,gpyt_model: gpytorch.mlls.ExactMarginalLogLikelihood(likelihood,gpyt_model),
    torch_optimizer_func = lambda gpyt_model: torch.optim.Adam(gpyt_model.parameters(),lr=0.1),
    gpytorch_train_iter = 800,
    gpytorch_use_gpu = gpytorch_use_gpu,
    verbose = 200,
    n_ref_approx = 2**22,
    seed_ref_approx = None)
solution,data = mcispfgp.integrate(seed=7,refit=False)
print(data)
df = pd.DataFrame(data.get_results_dict())
print("\nIteration Summary")
print(df)
data.plot();

reference approximation with d=3: 0.16239547729492188

batch 0

gpytorch model fitting

iter 200 of 800

likelihood.noise_covar.raw_noise.................. 2.18e+00

covar_module.raw_outputscale...................... 3.43e+00

covar_module.base_kernel.raw_lengthscale.......... -4.09e+00

iter 400 of 800

likelihood.noise_covar.raw_noise.................. 2.66e+00

covar_module.raw_outputscale...................... 4.26e+00

covar_module.base_kernel.raw_lengthscale.......... -4.99e+00

iter 600 of 800

likelihood.noise_covar.raw_noise.................. 3.08e+00

covar_module.raw_outputscale...................... 4.81e+00

covar_module.base_kernel.raw_lengthscale.......... -5.56e+00

iter 800 of 800

likelihood.noise_covar.raw_noise.................. 3.43e+00

covar_module.raw_outputscale...................... 5.24e+00

covar_module.base_kernel.raw_lengthscale.......... -6.00e+00

batch 1

AR sampling with efficiency 6.1e-03, expect 2626 draws: 1824,

batch 2

AR sampling with efficiency 5.3e-03, expect 3019 draws: 2096, 3013, 3668, 3930, 4061, 4192, 4323, 4454, 4585,

batch 3

AR sampling with efficiency 4.4e-03, expect 3631 draws: 2512, 4082, 4710, 4867, 5024,

batch 4

AR sampling with efficiency 4.0e-03, expect 4003 draws: 2784,

batch 5

AR sampling with efficiency 3.5e-03, expect 4576 draws: 3168, 4158, 4554, 4950,

batch 6

AR sampling with efficiency 3.0e-03, expect 5315 draws: 3680, 4600, 5060, 5520,

batch 7

AR sampling with efficiency 2.5e-03, expect 6313 draws: 4384,

batch 8

AR sampling with efficiency 2.4e-03, expect 6570 draws: 4560, 5985, 6270, 6555, 6840, 7125, 7410,

PFGPCIData (AccumulateData Object)

solution 0.166

error_bound 0.011

bound_low 0.155

bound_high 0.177

n_total 256

time_integrate 14.351

PFGPCI (StoppingCriterion Object)

Ishigami (Integrand Object)

Uniform (TrueMeasure Object)

lower_bound -3.142

upper_bound 3.142

DigitalNetB2 (DiscreteDistribution Object)

d 3

dvec [0 1 2]

randomize LMS_DS

graycode 0

entropy 17

spawn_key ()

Iteration Summary

| | n_sum | n_batch | error_bounds | ci_low | ci_high | solutions | solutions_ref | error_ref | in_ci |
|---|---|---|---|---|---|---|---|---|---|
| 0 | 128 | 128 | 3.0e-02 | 1.4e-01 | 2.0e-01 | 1.7e-01 | 1.6e-01 | 1.1e-02 | True |
| 1 | 144 | 16 | 2.6e-02 | 1.4e-01 | 2.0e-01 | 1.7e-01 | 1.6e-01 | 9.1e-03 | True |
| 2 | 160 | 16 | 2.2e-02 | 1.5e-01 | 1.9e-01 | 1.7e-01 | 1.6e-01 | 8.1e-03 | True |
| 3 | 176 | 16 | 2.0e-02 | 1.5e-01 | 1.9e-01 | 1.7e-01 | 1.6e-01 | 7.4e-03 | True |
| 4 | 192 | 16 | 1.7e-02 | 1.5e-01 | 1.8e-01 | 1.7e-01 | 1.6e-01 | 3.7e-03 | True |
| 5 | 208 | 16 | 1.5e-02 | 1.5e-01 | 1.8e-01 | 1.7e-01 | 1.6e-01 | 4.7e-03 | True |
| 6 | 224 | 16 | 1.3e-02 | 1.5e-01 | 1.8e-01 | 1.7e-01 | 1.6e-01 | 3.4e-03 | True |
| 7 | 240 | 16 | 1.2e-02 | 1.5e-01 | 1.8e-01 | 1.6e-01 | 1.6e-01 | 2.3e-03 | True |
| 8 | 256 | 16 | 1.1e-02 | 1.6e-01 | 1.8e-01 | 1.7e-01 | 1.6e-01 | 3.5e-03 | True |

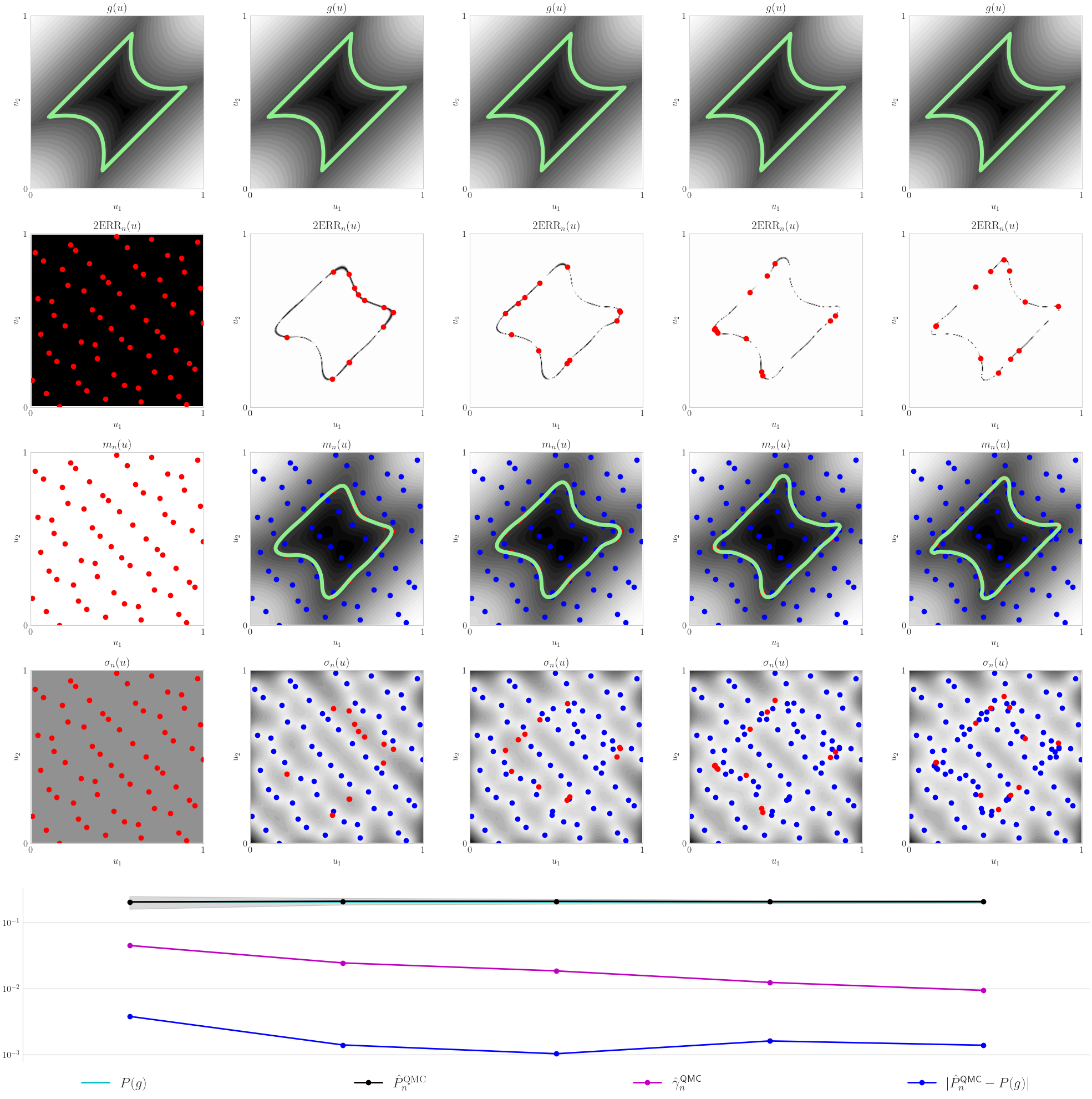
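As a sanity check on the reference approximation for the Ishigami run, the failure probability can also be estimated by plain Monte Carlo without a surrogate. The sketch below assumes the standard Ishigami function with a = 7 and b = 0.1 on Uniform(-π, π)³ (taken here to match the defaults of `qp.Ishigami`, which is an assumption) and counts failures as g(x) ≤ 0, mirroring `failure_threshold = 0` with `failure_above_threshold=False`. The function and variable names are illustrative.

```python
import numpy as np

def ishigami(x, a=7.0, b=0.1):
    """Standard Ishigami test function; a and b are assumed defaults."""
    return (1 + b * x[:, 2] ** 4) * np.sin(x[:, 0]) + a * np.sin(x[:, 1]) ** 2

rng = np.random.default_rng(7)
n = 2**18
x = rng.uniform(-np.pi, np.pi, size=(n, 3))  # samples from Uniform(-pi, pi)^3
y = ishigami(x)

# Failure is defined as the output falling at or below the threshold 0.
p_fail = np.mean(y <= 0)
# Binomial standard error of the Monte Carlo estimate.
std_err = np.sqrt(p_fail * (1 - p_fail) / n)
print("estimate %.4f +/- %.4f" % (p_fail, std_err))
```

This baseline spends 2**18 function evaluations, whereas the Gaussian process run stops at 256, which is the trade-off the surrogate approach is designed to exploit when each evaluation is expensive.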

Hartmann 6d Problem

mcispfgp = qp.PFGPCI(
    integrand = qp.Hartmann6d(qp.DigitalNetB2(6,seed=17)),
    failure_threshold = -2,
    failure_above_threshold=False,
    abs_tol = 2.5e-3,
    alpha = 1e-1,
    n_init = 512,
    init_samples = None,
    batch_sampler = qp.PFSampleErrorDensityAR(verbose=True),
    n_batch = 64,
    n_max = 2500,
    n_approx = 2**18,
    gpytorch_prior_mean = gpytorch.means.ZeroMean(),
    gpytorch_prior_cov = gpytorch.kernels.ScaleKernel(gpytorch.kernels.MaternKernel(nu=2.5)),
    gpytorch_likelihood = gpytorch.likelihoods.GaussianLikelihood(noise_constraint = gpytorch.constraints.Interval(1e-12,1e-8)),
    gpytorch_marginal_log_likelihood_func = lambda likelihood,gpyt_model: gpytorch.mlls.ExactMarginalLogLikelihood(likelihood,gpyt_model),
    torch_optimizer_func = lambda gpyt_model: torch.optim.Adam(gpyt_model.parameters(),lr=0.1),
    gpytorch_train_iter = 150,
    gpytorch_use_gpu = gpytorch_use_gpu,
    verbose = 50,
    n_ref_approx = 2**23,
    seed_ref_approx = None)
solution,data = mcispfgp.integrate(seed=7,refit=False)
print(data)
df = pd.DataFrame(data.get_results_dict())
print("\nIteration Summary")
print(df)
data.plot();

reference approximation with d=6: 0.007387995719909668

batch 0

gpytorch model fitting

iter 50 of 150

likelihood.noise_covar.raw_noise.................. 1.65e+00

covar_module.raw_outputscale...................... 5.53e-01

covar_module.base_kernel.raw_lengthscale.......... 2.79e-01

iter 100 of 150

likelihood.noise_covar.raw_noise.................. 2.80e+00

covar_module.raw_outputscale...................... 5.10e-01

covar_module.base_kernel.raw_lengthscale.......... 2.67e-01

iter 150 of 150

likelihood.noise_covar.raw_noise.................. 3.38e+00

covar_module.raw_outputscale...................... 5.14e-01

covar_module.base_kernel.raw_lengthscale.......... 2.68e-01

batch 1

AR sampling with efficiency 1.4e-03, expect 45089 draws: 31232, 35624,

batch 2

AR sampling with efficiency 2.1e-03, expect 30750 draws: 21312, 27306, 29304, 30969, 31635, 32301,

batch 3

AR sampling with efficiency 1.8e-03, expect 35103 draws: 24320, 32680, 36860, 38000,

batch 4

AR sampling with efficiency 1.6e-03, expect 41262 draws: 28608, 40230, 41571,

batch 5

AR sampling with efficiency 1.4e-03, expect 46939 draws: 32576, 43265, 47846, 50900,

batch 6

AR sampling with efficiency 1.2e-03, expect 54986 draws: 38144, 51852, 53044, 54236, 54832,

batch 7

AR sampling with efficiency 1.0e-03, expect 61209 draws: 42432, 59670, 66300, 68289, 69615, 70278,

batch 8

AR sampling with efficiency 9.2e-04, expect 69388 draws: 48128, 63168, 65424, 66176, 66928, 67680, 68432,

batch 9

AR sampling with efficiency 8.4e-04, expect 75933 draws: 52672, 66663, 71601, 73247,

batch 10

AR sampling with efficiency 7.6e-04, expect 84744 draws: 58752, 80784, 89046,

batch 11

AR sampling with efficiency 6.9e-04, expect 93317 draws: 64704, 93012, 99078, 101100, 103122, 105144,

batch 12

AR sampling with efficiency 6.3e-04, expect 101312 draws: 70208, 95439, 103118, 105312, 107506,

batch 13

AR sampling with efficiency 6.0e-04, expect 106776 draws: 74048, 87932, 92560,

batch 14

AR sampling with efficiency 5.7e-04, expect 112270 draws: 77824, 98496, 100928, 102144,

batch 15

AR sampling with efficiency 5.6e-04, expect 115250 draws: 79872, 113568, 124800, 126048, 127296, 128544, 129792, 131040, 132288, 133536, 134784,

batch 16

AR sampling with efficiency 5.2e-04, expect 123202 draws: 85376, 88044, 89378, 90712,

PFGPCIData (AccumulateData Object)

solution 0.007

error_bound 0.002

bound_low 0.005

bound_high 0.010

n_total 1536

time_integrate 250.998

PFGPCI (StoppingCriterion Object)

Hartmann6d (Integrand Object)

Uniform (TrueMeasure Object)

lower_bound 0

upper_bound 1

DigitalNetB2 (DiscreteDistribution Object)

d 6

dvec [0 1 2 3 4 5]

randomize LMS_DS

graycode 0

entropy 17

spawn_key ()

Iteration Summary

| | n_sum | n_batch | error_bounds | ci_low | ci_high | solutions | solutions_ref | error_ref | in_ci |
|---|---|---|---|---|---|---|---|---|---|
| 0 | 512 | 512 | 7.1e-03 | 0.0e+00 | 8.6e-03 | 1.5e-03 | 7.4e-03 | 5.9e-03 | True |
| 1 | 576 | 64 | 1.0e-02 | 0.0e+00 | 1.7e-02 | 6.6e-03 | 7.4e-03 | 8.3e-04 | True |
| 2 | 640 | 64 | 9.1e-03 | 0.0e+00 | 1.6e-02 | 7.3e-03 | 7.4e-03 | 1.1e-04 | True |
| 3 | 704 | 64 | 7.8e-03 | 0.0e+00 | 1.5e-02 | 7.5e-03 | 7.4e-03 | 1.1e-04 | True |
| 4 | 768 | 64 | 6.8e-03 | 7.4e-04 | 1.4e-02 | 7.6e-03 | 7.4e-03 | 1.7e-04 | True |
| 5 | 832 | 64 | 5.8e-03 | 1.8e-03 | 1.3e-02 | 7.6e-03 | 7.4e-03 | 2.3e-04 | True |
| 6 | 896 | 64 | 5.2e-03 | 2.4e-03 | 1.3e-02 | 7.6e-03 | 7.4e-03 | 2.0e-04 | True |
| 7 | 960 | 64 | 4.6e-03 | 2.9e-03 | 1.2e-02 | 7.5e-03 | 7.4e-03 | 1.5e-04 | True |
| 8 | 1024 | 64 | 4.2e-03 | 3.2e-03 | 1.2e-02 | 7.5e-03 | 7.4e-03 | 6.6e-05 | True |
| 9 | 1088 | 64 | 3.8e-03 | 3.6e-03 | 1.1e-02 | 7.4e-03 | 7.4e-03 | 1.6e-05 | True |
| 10 | 1152 | 64 | 3.4e-03 | 4.0e-03 | 1.1e-02 | 7.4e-03 | 7.4e-03 | 2.4e-05 | True |
| 11 | 1216 | 64 | 3.2e-03 | 4.3e-03 | 1.1e-02 | 7.4e-03 | 7.4e-03 | 3.2e-05 | True |
| 12 | 1280 | 64 | 3.0e-03 | 4.4e-03 | 1.0e-02 | 7.4e-03 | 7.4e-03 | 1.3e-05 | True |
| 13 | 1344 | 64 | 2.9e-03 | 4.6e-03 | 1.0e-02 | 7.4e-03 | 7.4e-03 | 1.3e-05 | True |
| 14 | 1408 | 64 | 2.8e-03 | 4.6e-03 | 1.0e-02 | 7.4e-03 | 7.4e-03 | 1.8e-05 | True |
| 15 | 1472 | 64 | 2.6e-03 | 4.8e-03 | 1.0e-02 | 7.4e-03 | 7.4e-03 | 2.6e-05 | True |
| 16 | 1536 | 64 | 2.5e-03 | 4.9e-03 | 9.8e-03 | 7.4e-03 | 7.4e-03 | 3.3e-05 | True |
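
Across the five runs, the final rows show two ways the iteration ended: either `error_bounds` fell to `abs_tol`, or the sample budget `n_max` left no room for another batch. The sketch below classifies each run from the values printed in this notebook. The exact comparison PFGPCI performs internally is not shown in the output, so the rule in the hypothetical helper `stop_reason` is an assumption that is merely consistent with these logs.

```python
# (final error bound, abs_tol, n_total, n_batch, n_max), copied from the runs above
runs = {
    "Sin1d":        (7.3e-2, 1e-2,   20,   4,  20),
    "Multimodal2d": (7.9e-2, 1e-2,   128,  16, 128),
    "FourBranch2d": (9.4e-3, 1e-2,   112,  12, 200),
    "Ishigami":     (1.1e-2, 1e-2,   256,  16, 256),
    "Hartmann6d":   (2.5e-3, 2.5e-3, 1536, 64, 2500),
}

def stop_reason(error_bound, abs_tol, n_total, n_batch, n_max):
    """Assumed rule: stop on tolerance first, otherwise when a batch no longer fits."""
    if error_bound <= abs_tol:
        return "tolerance met"
    if n_total + n_batch > n_max:
        return "budget exhausted"
    return "undetermined"

reasons = {name: stop_reason(*vals) for name, vals in runs.items()}
for name, reason in reasons.items():
    print("%-13s %s" % (name, reason))
```

Under this reading, only the Four Branch and Hartmann runs reached their tolerance; the other three would need a larger `n_max` to bring the error bound down to `abs_tol`.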